Ask any software engineer with a decade of experience what it feels like to work in 2026, and you will hear the same tremor in the voice. It is not the fear of a machine becoming sentient. It is the quieter, more corrosive dread of becoming obsolete while still sitting at the keyboard. The technological Cassandras will tell you to rejoice or to panic, depending on which cult they have sworn allegiance to. The pro-AI congregation preaches a gospel of frictionless creation where you whisper a wish to the machine and a fully formed application tumbles out. The anti-AI cult, meanwhile, is busy declaring a psychic death of craft, insisting that every line of generated code is a little soul-murder. Both are tedious. Both miss the geometry of the actual crisis.

The Geometry of the Crisis

To understand the plight of the experienced builder, you need to stop thinking in terms of code and start thinking in terms of spatial economics. Picture, if you will, a vast two-dimensional plane representing every possible software product that can be built. On this plane, every single point is a specific idea: a task manager for left-handed gardeners, a better OAuth library, a telehealth platform for nervous parrots. For as long as software has existed, this 2D space has had two very distinct types of terrain: dense clusters and wide, empty savannahs.

For an engineer who actually cared about the craft, who understood memory management, who knew why a hash table was not just magic, who could architect a system that didn’t collapse under its own weight, the savannahs were home. Those sparse, unoccupied points on the map were where you could graze in peace. You could see a thousand project ideas because the barrier to entry was not just having the idea, but possessing the sheer technical competence to execute it properly. Your skill was a machete that let you hack through the jungle into a clearing where only a handful of similarly skilled people could follow. The market might not have understood why your product didn’t crash under load, but it didn’t matter. Nobody could possibly know. The laymen only saw a product that simply existed where others did not.

This is what peak success looked like in the 1990s. John Carmack, the engineering mastermind behind the hit games DOOM and Quake, made his career through optimisation hacks.

When the Machete Means Nothing

What artificial intelligence, or more specifically large language models strapped to code editors, has done is bulldoze the jungle. It has given a machete to everyone with a credit card and a pulse. We have entered the age of the “vibe-coded,” 90%-good-enough solution. The great unskilled many, who previously could not hope to occupy the sparse, technically demanding spaces you inhabited, now can. They throw a hazy prompt at an AI, get a vaguely functional React component, slather it in Tailwind, patch the critical security holes by asking the AI “why is my database public?”, and ship. The product is a monolith of spaghetti logic with the structural integrity of wet cake, but it works. It occupies the point on the 2D plane.

And here is the gut-punch that leaves the seasoned engineer reaching for a drink: the market does not care. It never did, really, but now the disparity is grotesque. Building something twice as elegant, three times as performant, infinitely more maintainable, has virtually no return on investment when the competitor’s creaking scaffolding of a product got there first and captured the niche. The market settled for “good enough,” and AI has made “good enough” an absolutely trivial achievement. The savannah you once roamed is now a suburban mall, every square inch occupied by a cheap, AI-generated facade. The frustration sets in when you realize that your ability to build a thing beautifully is no longer a commercial moat, but a luxury good that nobody asked for.

The natural inclination of the anti-AI cult is to scream. To demand that the bulldozers be smashed, that we return to the dense, sweaty jungle of manual memory management and hand-rolled authentication. This is the digital equivalent of the 19th-century painter Paul Delaroche declaring painting dead upon seeing a photograph. It is a tantrum, not a strategy. The bulldozers are not going back in the box. So, what do you do when your home becomes a mall? You don’t cry, although some people do.

The Sparse Frontier

You find the really sparse frontier. You deliberately seek out the product spaces that are, by their very nature, resistant to the pattern-matching autocomplete engines we mislabel as intelligence. These are not merely projects that require “hard coding challenges.” An AI is already terrifyingly good at solving a neatly defined LeetCode or Codewars problem. The space you need to move into is one where the problem itself is an enormous, high-dimensional mess of ambiguity.

Think of it as an absurdly high-dimensional search space. To get to a viable product idea, you need to answer not three or four questions, but thousands of them, all interlocking, all poorly documented. There is no average solution, no median outcome on GitHub that an AI has scraped fifty thousand times. These are the boring spaces, the unsexy domains where the real-world constraints are so thorny that even a hallucinating LLM cannot weave a coherent narrative. It is the kind of project that takes months to build, not because the code is a symphony of algorithmic genius, but because you spend the first six weeks just figuring out what the right questions are. It requires a community with a shared, evolving taste. It requires a human face, a reputation, a sense of trust that an API endpoint cannot simulate. It requires taste: that deeply human, annoyingly unquantifiable ability to know which of a hundred possible directions is the one that doesn’t suck.

The Speed Trap

But there is a seductive gravity that tries to keep you in the mall, and it is exactly what makes the sparse frontier so repellent to those who have tasted the narcotic. AI coding works. That, in itself, is the trap. Not just sort-of-works-for-a-demo, but absolutely-incredible-I-built-a-whole-SaaS-in-a-weekend works. For a senior engineer, the first time you point an agent at a codebase and watch it stitch together a feature that would have taken you three days of deep, painfully laser-sharp focus, you feel a rush that is indistinguishable from cheating. It is not a tool swap from shovel to excavator, but something else entirely. The dopamine hits are immediate, the feedback loop viciously tight. You begin to resent the slow, laborious parts of the craft because the AI has shown you a world where you whisper architecture and watch a working pull request materialise. Dropping that for the methodical, manual work feels like trying to quit a narcotic and return to boredom.

And here is the cruel irony that turns the rush into a hangover: the very object of your addiction is also the mechanism that erodes your craft from the inside. When one engineer, armed with an agent, can produce ten times the code, the bottleneck merely shifts. You stop being a builder and become a verifier. Several studies, from independent surveys to Anthropic itself, show (unsurprisingly) that the volume of AI-assisted code is saturating review pipelines. The worst part of the job, the dreary, soul-grinding work of reading other people’s (or in this case, a machine’s) logic, swells to fill your entire day. You are no longer the architect with a machete; you are the exhausted gatekeeper signing off on a black box whose internal reasoning you can no longer realistically audit in detail. That addictive, effortless productivity rewrites your job description from “problem solver” to “rubber stamp”.

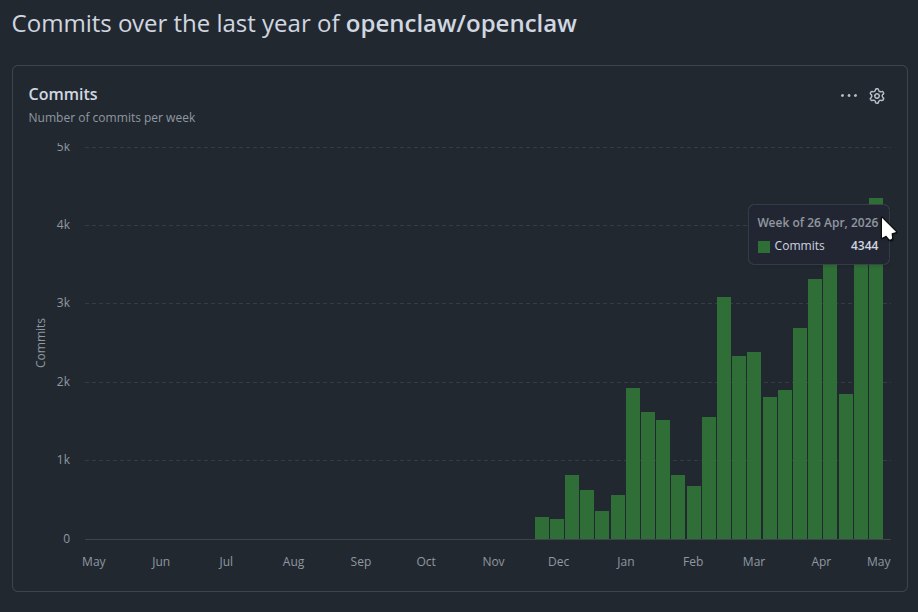

Openclaw openly embraces AI-assisted solutions. This is the pace at which commits are merged into it.

Escaping to the sparse frontier, then, is not merely a strategic retreat from market competition. It is a reclamation of the very reason some entered the field. The hardware lab, the robotics bay, the niche industrial automation cell: these are places where the feedback loop is not a text prompt but a physical arm moving in space, where the challenge is not policing an AI’s hallucinated API call but diagnosing why a sensor is misbehaving in humid weather. The narcotic of pure code generation has no purchase there, because the training data doesn’t exist, and the messy, analogue world refuses to be autocompleted.

If you are one of those people, moving to that frontier is how you stay an engineer who still feels proud of building something, instead of an addict presiding over a continent of mediocre code that you didn’t really write and don’t fully understand. Some of us might stay behind.

The Cartographer’s Trade

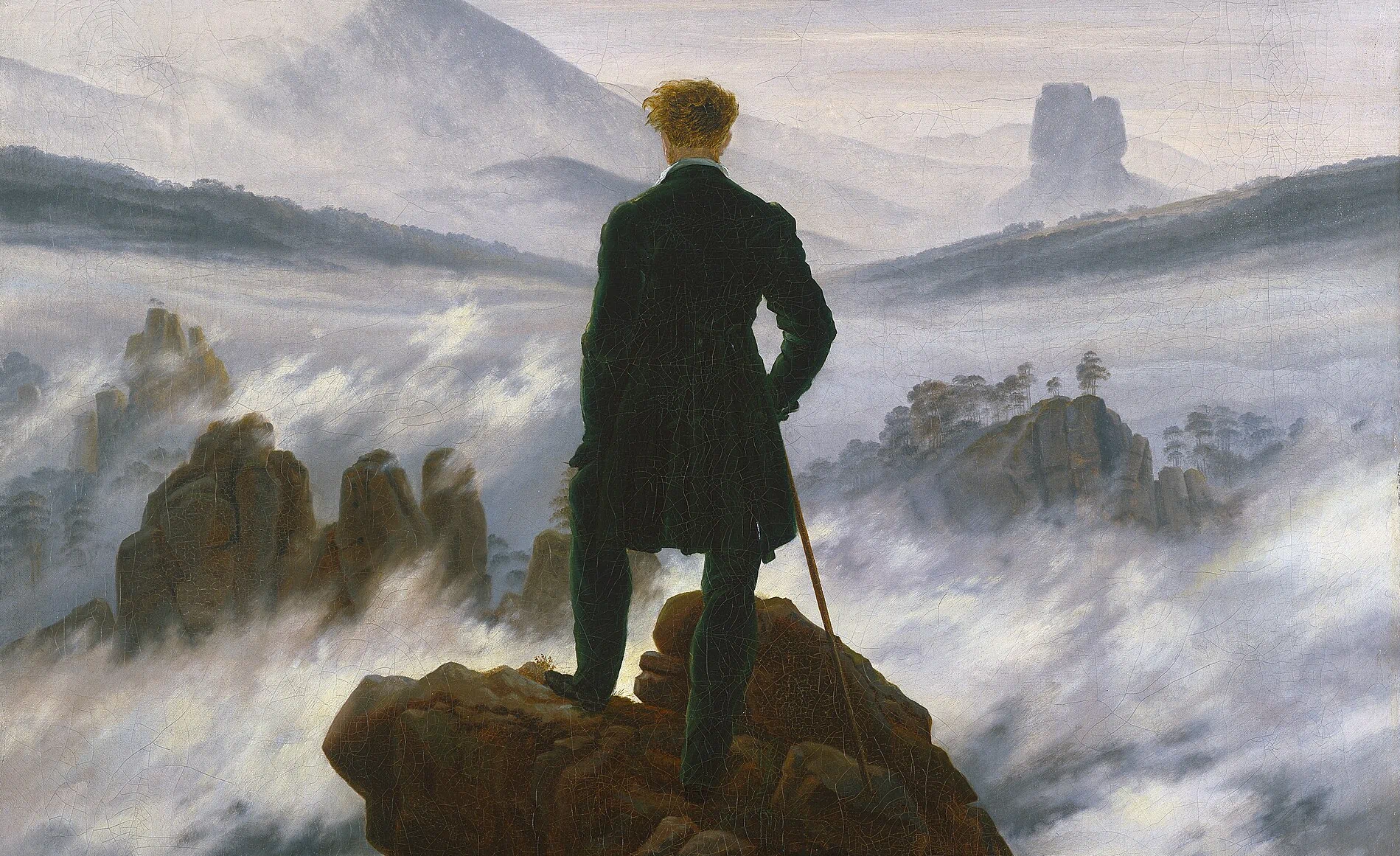

This is not a retreat from the engineering spirit. It is a purification of it. What we are witnessing is the forced evolution of the software engineer from a syntax-producing unit into a high-level decision architect. The axis of skill is shifting violently. The pride no longer comes from a clever bit-shifting trick or a meticulously optimised garbage collector. The new pride comes from navigating a labyrinth of constraints that no AI was trained on, because the training data simply does not exist in any open-source repository, or anywhere on the Internet at all. You stop being a code factory and become a connoisseur of complexity, a cartographer of the uncharted.

Look at hardware. Look at robotics. These fields are the quintessential sparse frontier. The software that drives a physical machine in a specific, niche industrial setting is not something you can prompt out of DeepSeek. The codebases that talk to proprietary sensors, that handle the physics of a specific gear ratio, that manage the real-time chaos of a dusty factory floor, are ghosts. They don’t live on Stack Overflow. They live in the minds of the engineers who cursed their way through building them. An AI cannot automate away the brutal physicality of a robot arm bending a bracket slightly wrong on a Tuesday because of ambient humidity. It cannot hallucinate a solution to a connector that only exists in a single supplier’s catalogue from 2003. The high-dimensional search space here involves software, yes, but also materials science, electrical noise, thermal dynamics, and the unholy mess of the real world. The unskilled horde cannot occupy this space because the “vibe” required is not in their prompting vocabulary. In a very sad age where most countries have fertility rates well below 2.1 children per woman, the rise of robotics seems increasingly inevitable and critical.

Take a look at these words by the engineering genius who is partially responsible for not just some of the greatest video games ever made, but some of the most impressive software engineering ever done:

[…] I will engage with what I think your gripe is — AI tooling trivializing the skillsets of programmers, artists, and designers.

My first games involved hand assembling machine code and turning graph paper characters into hex digits. Software progress has made that work as irrelevant as chariot wheel maintenance.

Building power tools is central to all the progress in computers.

Game engines have radically expanded the range of people involved in game dev, even as they deemphasized the importance of much of my beloved system engineering.

AI tools will allow the best to reach even greater heights, while enabling smaller teams to accomplish more, and bring in some completely new creator demographics.

Yes, we will get to a world where you can get an interactive game (or novel, or movie) out of a prompt, but there will be far better exemplars of the medium still created by dedicated teams of passionate developers.

The world will be vastly wealthier in terms of the content available at any given cost.

Will there be more or less game developer jobs? That is an open question. It could go the way of farming, where labor saving technology allow a tiny fraction of the previous workforce to satisfy everyone, or it could be like social media, where creative entrepreneurship has flourished at many different scales. Regardless, “don’t use power tools because they take people’s jobs” is not a winning strategy.

— John Carmack, 7th April 2025, Twitter

This is the antidote to the existential dread. The AI steamroller is not coming for you; it is coming for the malls. It is paving over the generic, the template-driven, the “how to centre a div” parts of our profession. The really, really boring parts. The pro-AI cult is outsourcing its brain to the steamroller and wondering why its dating app got hacked. The anti-AI cult is lying down in front of the steamroller and complaining that it’s ruining the view. The person who adapts is the one who looks at the flattened landscape, shrugs, and sets off on foot toward the jagged, dangerous, infinitely complex mountain range beyond the blacktop.

That mountain range is where the interesting work lives. It is where the noise of the crowd fades and the only signal left is the one you create by asking a thousand questions nobody has thought to ask yet. The software engineering spirit is not dead. It just no longer has time to stare at a syntax highlighter and feel proud of the colours. Bin that! It has a map to read instead.